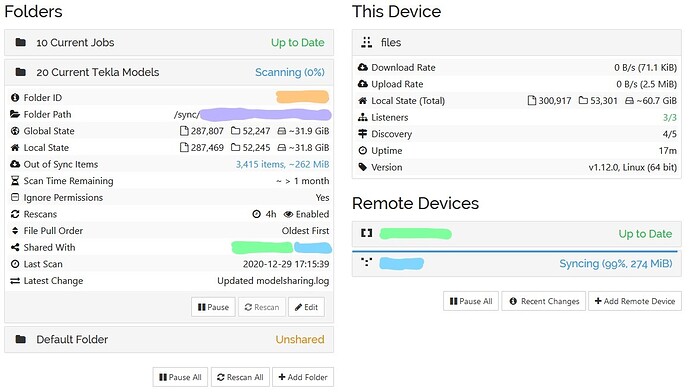

Actually loading or reloading the GUI drives the mem usage up to 520 MB. I'd say this is acceptable, but a bit on the high side. With v0.9.1 at idle (syncthing having done nothing for several minutes this is relevant, more about that later) syncthing uses about 110 MB of RAM.

I created a test repo with 200 GB in 250,000 files and measured memory usage. Some more info here, since I've done some testing. There I assume using librsync might be a better option.

I assume the CPU usage is hashing lots of files. Moving that index to disk would also make a lot more sense. On average (depends on filename sizes) that should only be 50-60 bytes per item which would only be 13MB. The index should only really need to store filename, parent, permissions, size and timestamp. 700MB is 2.6kb per item on disk, which seems way too high. Without looking at the code I assume an index is being kept in memory for all the repository contents. At the current level of usage that would require ~8GB of memory just for syncthing.

A typical NAS server like the two I have holds >4TB of storage. While the CPU usage I could manage during the initial sync the memory usage is simply too high. To sync these three repositories syncthing 0.9.0 uses a bit over 700MB of RAM and while syncing continuously pegs the CPU at 150% on all nodes.

My repositories have the following sizes: I've been testing syncthing across 3 machines, a laptop with 8GB of RAM and two NAS-style servers with 1GB and 2GB of RAM.
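To make the back-of-the-envelope index estimate in this post concrete, here is a minimal Go sketch (Go because syncthing itself is written in Go). The `indexEntry` type, its field widths, and the `encodedSize` and `totalIndexBytes` helpers are hypothetical: they model only the five fields listed in the post (filename, parent, permissions, size, timestamp) and are not syncthing's actual index format. The 250,000 item count is borrowed from the test repo purely for illustration.

```go
package main

import "fmt"

// indexEntry is a HYPOTHETICAL minimal record holding only the fields the
// post lists. It is not syncthing's real in-memory or on-disk format.
type indexEntry struct {
	Name        string // base name only; the full path is rebuilt via Parent
	Parent      uint32 // index of the parent directory entry
	Permissions uint32 // Unix mode bits
	Size        int64  // file size in bytes
	ModTime     int64  // modification time, Unix seconds
}

// encodedSize approximates a compact serialized size: fixed-width numeric
// fields, a 2-byte length prefix, then the raw bytes of the name.
func (e indexEntry) encodedSize() int {
	const fixed = 4 + 4 + 8 + 8 // Parent + Permissions + Size + ModTime
	return fixed + 2 + len(e.Name)
}

// totalIndexBytes multiplies a per-entry size by the number of entries.
func totalIndexBytes(perEntry, items int) int {
	return perEntry * items
}

func main() {
	// Illustrative entry with an assumed 22-byte file name.
	e := indexEntry{Name: "holiday-photo-0001.jpg"}
	fmt.Println("bytes per entry:", e.encodedSize())

	// Illustrative item count (the 250,000-file test repo), not the
	// combined size of the three repositories.
	const items = 250000
	for _, per := range []int{50, 60, 2600} {
		mb := float64(totalIndexBytes(per, items)) / 1e6
		fmt.Printf("%4d B/item x %d items = %.1f MB\n", per, items, mb)
	}
}
```

With this layout a 22-byte name gives 48 bytes per entry, in line with the 50-60 byte guess, while 2.6 KB per item over the same count lands in the hundreds of megabytes. The sketch deliberately ignores the overhead any real store adds (Go string headers, map buckets, database pages), so treat it as a lower bound rather than a prediction.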
